On March 30 and 31, 2025, Anthropic unintentionally exposed roughly 512,000 lines of TypeScript source for Claude Code, its AI coding tool, through a source map file shipped in an npm package. The packaging misstep offers a rare window into the company's roadmap and underscores a risk that applies to any organization publishing JavaScript packages.

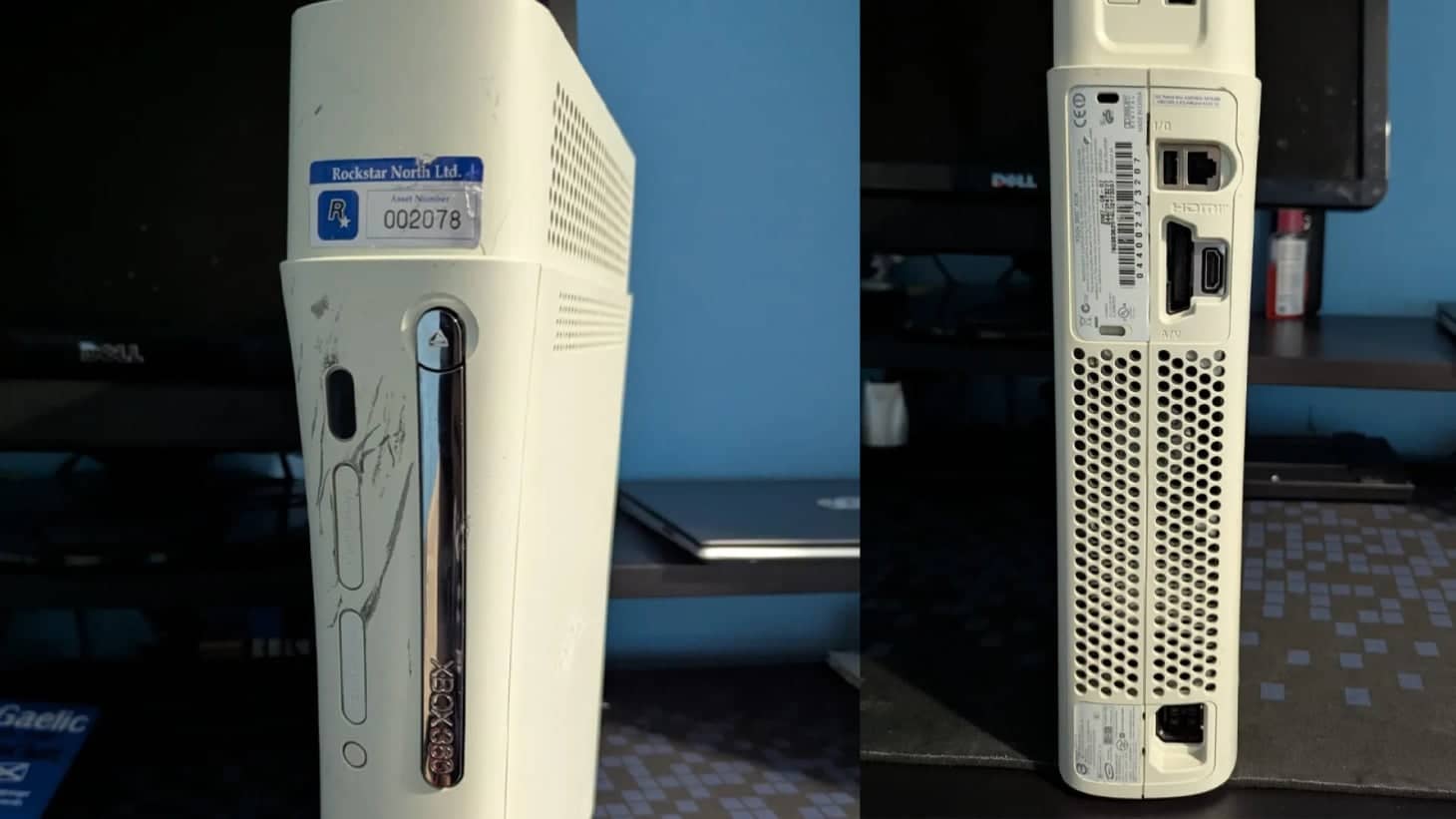

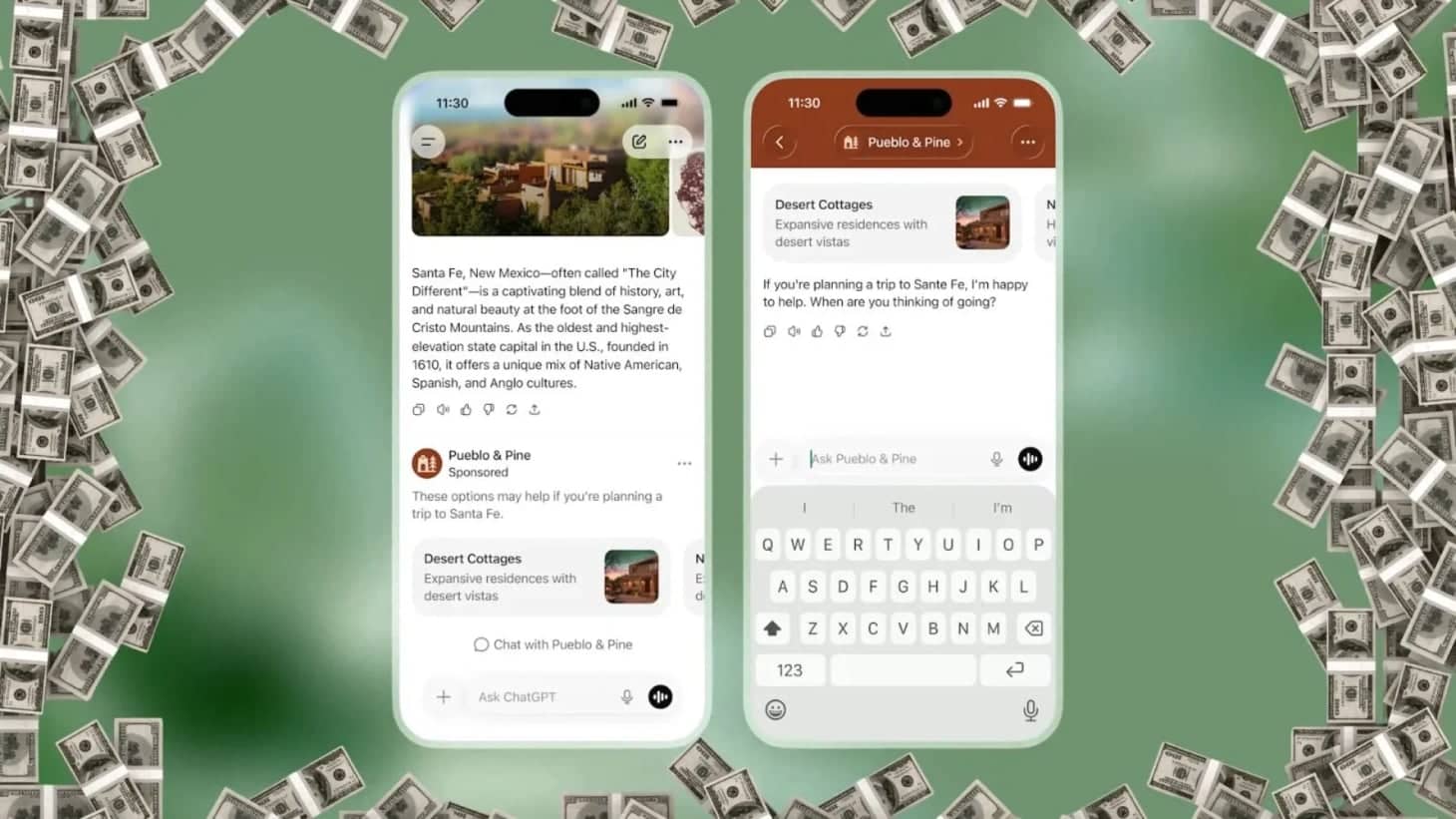

Why it matters: The exposure reveals previously undisclosed models (Opus 4.7, Sonnet 4.8, and a prototype named Capybara) alongside internal features such as a stealth "Cover Mode," a long-term memory system called KAIROS, and even a virtual pet named BUDDY. More broadly, it highlights a systemic weakness in the software supply chain at a moment when credential leaks are accelerating.

What they're saying: Anthropic has not issued a public statement. Industry analysts, however, note that the leak arrived during a broader surge in credential exposure across public code. The fifth edition of GitGuardian's State of Secrets Sprawl report, released on March 17, 2025, documented 28.65 million new hardcoded secrets in public GitHub commits during 2024 and an 81 percent year-over-year increase in AI service credential leaks.

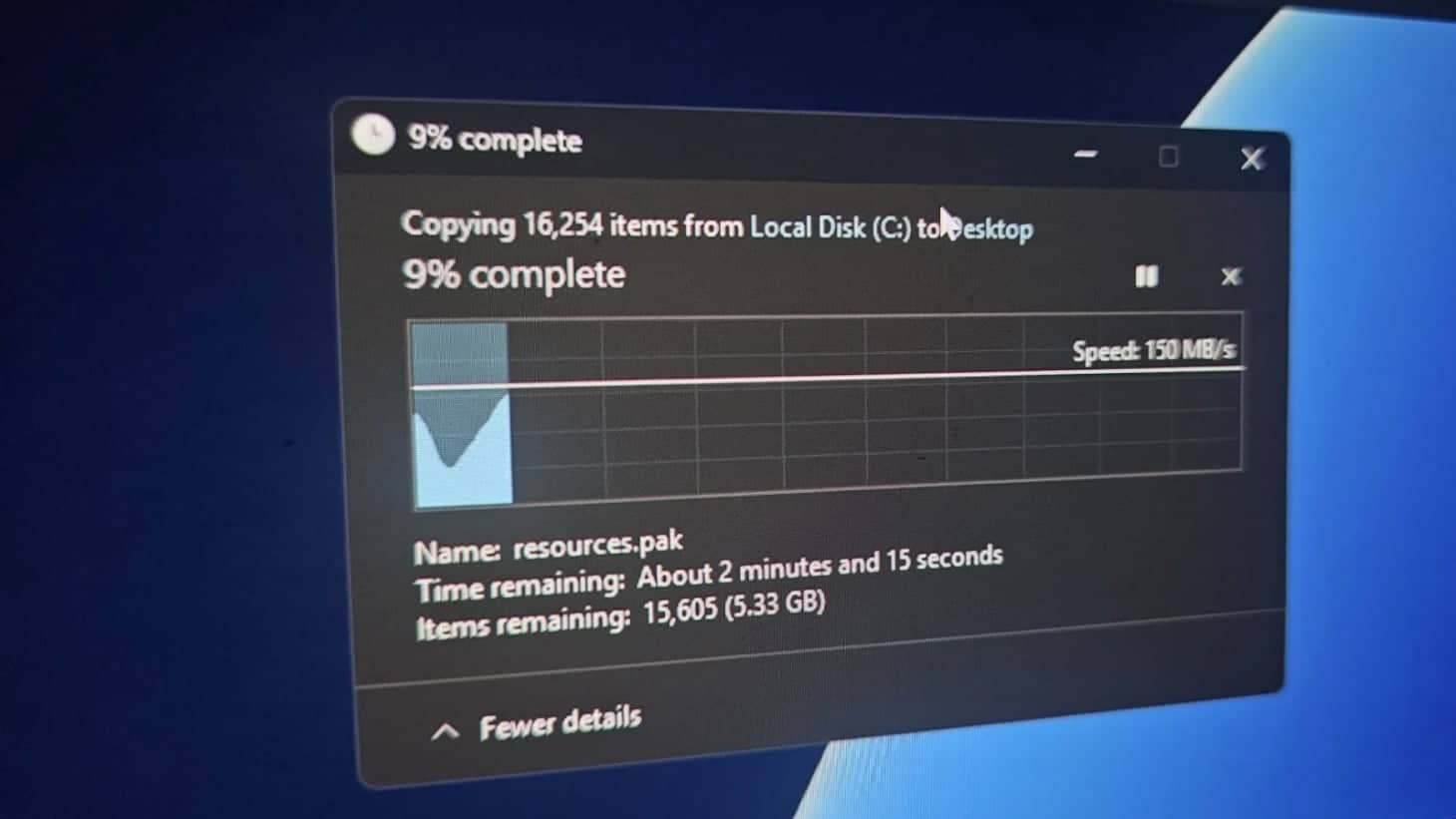

Source map files map minified JavaScript back to the original source code so that developers can debug production builds. When a source map is published alongside a package, anyone who downloads it can use it to reconstruct much of the original codebase.
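To make the mechanism concrete, here is a minimal sketch of how original files can be read out of a source map. It assumes the map follows the Source Map v3 JSON layout and embeds the original text in the optional `sourcesContent` field; whether the map in this incident did so is not stated here. The function name `recover_sources` and the tiny `example_map` are hypothetical, written only for illustration.

```python
import json


def recover_sources(source_map_text: str) -> dict[str, str]:
    """Return {source path: original text} for every source embedded in the map."""
    source_map = json.loads(source_map_text)
    paths = source_map.get("sources", [])
    # "sourcesContent" is optional; individual entries may also be null.
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for path, content in zip(paths, contents):
        if content is not None:
            recovered[path] = content
    return recovered


# Hypothetical, hand-written map used only to demonstrate the idea.
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["../src/main.ts", "../src/util.ts"],
    "sourcesContent": ["export const answer: number = 42;\n", None],
    "names": [],
    "mappings": "",
})

for path, text in recover_sources(example_map).items():
    print(f"{path}: {len(text)} characters recovered")
```

When `sourcesContent` is absent, a map still reveals file paths and identifier names, but the original text cannot be recovered this directly.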

Technical details of the exposure: Version 2.1.88 of the npm package included a 59.8 MB .map file covering roughly 1,900 files. Researchers extracted approximately 512,000 lines of TypeScript, revealing internal modules such as "Cover Mode" for stealth code contribution, the long-term memory system KAIROS, a virtual pet named BUDDY, and a profanity-based frustration tracker designed to gauge developer sentiment.

The pattern beneath the numbers: GitGuardian data shows that commits co-authored by Claude Code exhibited a 3.2 percent secret leak rate, more than double the 1.5 percent baseline for all public commits. With public GitHub activity reaching 1.94 billion commits in 2024, a 43 percent year-over-year rise, the scale of the supply chain risk is no longer theoretical. It is structural.
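As a quick sanity check on those figures, the arithmetic can be worked through directly. This sketch uses only the numbers quoted above; the variable names are illustrative, and the prior-year commit count is an implied value derived from the stated growth rate, not a figure from the report.

```python
# Figures as quoted in the text above.
claude_code_leak_rate = 3.2   # percent of co-authored commits leaking a secret
baseline_leak_rate = 1.5      # percent across all public commits
commits_2024_billion = 1.94
yoy_growth = 0.43             # 43 percent year-over-year rise

# Ratio behind the "more than double" claim.
leak_rate_ratio = claude_code_leak_rate / baseline_leak_rate

# Prior-year volume implied by the stated growth (derived, not reported).
prior_year_commits_billion = commits_2024_billion / (1 + yoy_growth)

print(f"leak rate ratio: {leak_rate_ratio:.2f}x")
print(f"implied prior-year commits: {prior_year_commits_billion:.2f} billion")
```

The ratio comes out at a little over 2.1, consistent with "more than double," and the implied prior-year volume is about 1.36 billion commits.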

Comparison with other major tech leaks: The 2024 Google Search documentation leak disclosed 350,000 lines of indexing code, while the Claude leak reveals 512,000 lines of active model logic and future model references, making the latter broader in scope and more directly tied to AI product roadmaps.

What's next: Experts warn that internal repositories are approximately six times more likely than public ones to contain hardcoded secrets, and 28 percent of leaks now originate from collaboration tools such as Slack, Jira, and Confluence. Moreover, 64 percent of valid secrets detected in 2022 remained active when re-tested in January 2025, indicating a persistent remediation gap that organizations have yet to close.

Recommended security measures for AI labs:

- Implement automated scanning of npm packages for accidental source map inclusion before publishing.

- Adopt secret management tools that keep credentials out of source code and rotate them automatically, including immediately after any suspected exposure.

- Enforce strict code review policies that flag hardcoded keys in both public and private repositories.

- Conduct regular penetration tests of the software supply chain, focusing on third-party dependencies.

- Provide developer training on secure packaging practices and the risks of source map exposure.

- Integrate real-time alerts for anomalous commit patterns using services like GitGuardian.

- Maintain an incident response playbook specific to supply chain breaches.
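The first recommendation, scanning for source maps before publishing, can be sketched as a small pre-publish check. This is an illustrative outline under stated assumptions, not a complete tool: it simply walks a build directory for `*.map` files and does not replicate npm's own inclusion rules (the `files` field in package.json or `.npmignore`). The function names `find_source_maps` and `check_package` are hypothetical.

```python
import tempfile
from pathlib import Path


def find_source_maps(package_dir: str) -> list[tuple[str, int]]:
    """Return (relative path, size in bytes) for each *.map file under package_dir."""
    root = Path(package_dir)
    hits = []
    for path in sorted(root.rglob("*.map")):
        if path.is_file():
            hits.append((path.relative_to(root).as_posix(), path.stat().st_size))
    return hits


def check_package(package_dir: str) -> int:
    """Print any findings and return an exit code; 1 is meant to block a publish step."""
    hits = find_source_maps(package_dir)
    for rel_path, size in hits:
        print(f"source map found: {rel_path} ({size} bytes)")
    return 1 if hits else 0


# Demonstration on a throwaway directory standing in for a build output.
with tempfile.TemporaryDirectory() as demo_dir:
    dist = Path(demo_dir) / "dist"
    dist.mkdir()
    (dist / "cli.js").write_text("console.log('hi');\n")
    (dist / "cli.js.map").write_text('{"version": 3}')
    demo_exit_code = check_package(demo_dir)
```

In a release pipeline, a check like this would run against the exact file set about to be published, and a nonzero exit code would stop the release until the map is removed or its inclusion is explicitly approved.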

For a deeper look at Anthropic's model roadmap, see our earlier coverage of Claude Opus 4.5 for autonomous coding. The Claude Code leak underscores the urgent need for robust software supply chain security as AI development accelerates and the stakes of accidental exposure continue to rise.