Cold-start recommendation click-through rates jump 2.3 percent when multimodal embeddings replace text-only models, according to a 2024 VK benchmark. That gain translates into millions of extra views for platforms serving billions of videos daily. Multimodal AI, which fuses visual, audio, and textual signals, powers cross-format discovery across YouTube Shorts, Instagram Reels, TikTok, and VK Clips.

Why single-modality models miss the mark

Classic pipelines treat each signal as a separate silo. A text model reads captions. A vision model scans frames. An audio model processes sound. When a video titled "how to pack for winter hiking" appears, the text model matches the words "pack" and "winter," but it cannot tell whether the footage shows snow-capped peaks or a bedroom closet. The result is a recommendation that feels generic and often irrelevant.

Industry data show that platforms relying on single-modality signals see recommendation click-through rates plateau around 5 percent after the first year of growth. The limitation matters because streaming services report that 70 to 80 percent of consumption comes from personalized feeds. When the feed fails to capture the full meaning of content, user satisfaction drops and churn rises.

How contrastive learning builds a shared meaning space

Contrastive learning teaches a model to pull matching signals together and push mismatched ones apart. During training, the system receives paired inputs. A video frame and its spoken description form one pair. The system also sees random mismatched pairs, such as a frame of a desert with a soundtrack about city traffic.

Each input is mapped to a high-dimensional vector called an embedding. The loss function rewards the model for reducing the distance between the embeddings of matching pairs. Mismatches receive the opposite treatment: the model increases the distance between the embeddings of unrelated content.
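The pull-together, push-apart objective can be sketched in a few lines. The example below is a minimal illustration, assuming a CLIP-style InfoNCE loss computed in one direction (frame to description); the article does not name the exact loss used in any production system, and the function name, temperature value, and toy two-dimensional vectors are all hypothetical.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    """Scale a vector to unit length so the dot product equals cosine similarity."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def info_nce_loss(frame_embs, description_embs, temperature=0.1):
    """One-directional InfoNCE: frame i should match description i and no other.

    temperature=0.1 is an illustrative value, not a recommended setting.
    """
    frames = [normalize(v) for v in frame_embs]
    descriptions = [normalize(v) for v in description_embs]
    total = 0.0
    for i, frame in enumerate(frames):
        # Similarity of this frame to every description in the batch.
        logits = [dot(frame, d) / temperature for d in descriptions]
        # Numerically stable log-sum-exp over the batch.
        peak = max(logits)
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        # Cross-entropy with the matching description as the correct class.
        total += log_denominator - logits[i]
    return total / len(frames)


# Toy batch: two frames and their descriptions (hypothetical 2-d embeddings).
frame_embs = [[1.0, 0.0], [0.0, 1.0]]
matching_descriptions = [[0.9, 0.1], [0.1, 0.9]]
mismatched_descriptions = [[0.1, 0.9], [0.9, 0.1]]

loss_matching = info_nce_loss(frame_embs, matching_descriptions)
loss_mismatched = info_nce_loss(frame_embs, mismatched_descriptions)
```

In this toy batch the loss is close to zero when each frame sits next to its own description and much larger when the descriptions are swapped. That gap is the signal gradient descent would use to move embeddings during training.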

An embedding is a numeric fingerprint that locates content in a semantic space. Videos about mountain trails cluster near each other. Cooking tutorials form a separate region. Once content is represented this way, it can be compared across formats and modalities.
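To make the idea of clustering concrete, here is a small sketch that compares embeddings with cosine similarity, one common choice of distance measure. The three-dimensional vectors and item names are invented for illustration; real embeddings typically have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings: two items about mountain trails, one about cooking.
trail_video = [0.9, 0.1, 0.2]
trail_article = [0.8, 0.2, 0.1]
cooking_tutorial = [0.1, 0.9, 0.3]

sim_same_topic = cosine_similarity(trail_video, trail_article)
sim_cross_topic = cosine_similarity(trail_video, cooking_tutorial)
```

The trail video scores far higher against the trail article than against the cooking tutorial, even though one is a video and the other is text. That is the sense in which content can be compared across formats.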

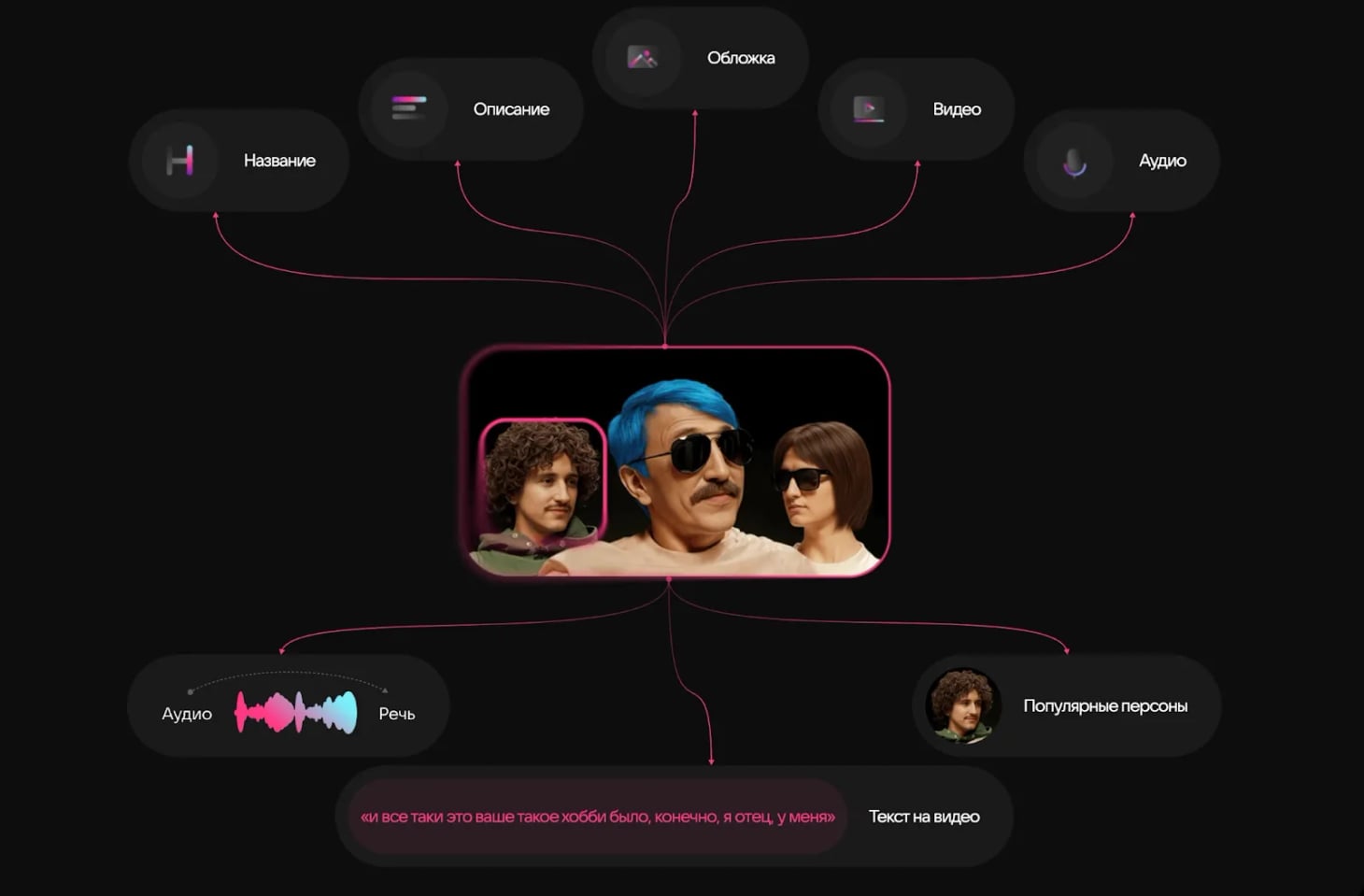

OpenAI's CLIP paper introduced large-scale contrastive image-text pre-training and showed that the approach works at scale. VK's production pipeline extends the same principle to video, audio, and text. The system generates embeddings in real time for every upload.

What users gain from unified content understanding

Unified embeddings let platforms search by example instead of keywords. A user uploads a photo of a misty mountain. The system retrieves videos that share visual texture, ambient sound, and even the emotional tone of the image. Another user hums a melody. The model matches it to clips with similar acoustic patterns. The result is a discovery experience that feels intuitive rather than forced.
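Search by example reduces to a nearest-neighbor lookup in the shared space. The sketch below assumes the query photo and the catalog clips have already been embedded by the same model; the catalog IDs, vectors, and the `search_by_example` helper are hypothetical. It scans every item, which is fine for a demo, whereas a catalog of real size would normally sit behind an approximate nearest-neighbor index.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b)


def search_by_example(query_emb, catalog, k=2):
    """Return the IDs of the k catalog items most similar to the query."""
    scored = [
        (cosine_similarity(query_emb, emb), item_id)
        for item_id, emb in catalog.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [item_id for _, item_id in scored[:k]]


# Hypothetical catalog of clip embeddings.
catalog = {
    "clip_foggy_ridge": [0.9, 0.2, 0.1],
    "clip_alpine_lake": [0.7, 0.3, 0.2],
    "clip_pasta_recipe": [0.1, 0.9, 0.2],
    "clip_city_traffic": [0.1, 0.2, 0.9],
}

# Hypothetical embedding of the user's misty-mountain photo.
photo_query = [0.85, 0.25, 0.1]

results = search_by_example(photo_query, catalog, k=2)
```

With these toy vectors the two mountain clips rank first. A hummed melody would follow the same path, with an audio encoder producing the query embedding instead of an image encoder.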

Metrics from recent A/B tests show that adding multimodal embeddings lifts recommendation click-through rates by 1 to 3 percentage points. Average watch time increases by several percent. Those gains matter because they directly impact revenue for ad-supported services and affect subscription retention for premium platforms.

Cross-platform recommendations in action

Semantic links bridge formats across a company's ecosystem. A reader finishes an article about sustainable travel on VK News. Moments later, a short video about eco-lodges in the Altai mountains appears in the VK Clips feed. The recommendation engine recognizes that both pieces discuss eco-lodging despite one being text-heavy and the other visual.

The system encodes meaning rather than surface keywords, so it can surface content that users are likely to enjoy even if they never explicitly searched for it. This cross-format flow keeps users engaged longer and reduces the need to switch apps. Similar patterns appear on U.S. platforms, where Instagram Reels surfaces content related to articles saved on Facebook, or YouTube Shorts recommends videos aligned with long-form watch history.

When algorithms explain their choices

Transparency demands that platforms surface the reason behind a recommendation. Future interfaces may display a badge: "We suggested this video because it shares visual scenery with articles you read." Such explanations build trust and give users a way to provide feedback on relevance.
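One simple way to produce such a badge is to report the modality that contributed most to the match. The sketch below assumes the system can expose a per-modality similarity score for each recommendation, which the article does not confirm; the `explain_recommendation` function and the wording templates other than the quoted badge are hypothetical.

```python
def explain_recommendation(modality_similarity):
    """Build a badge from the modality with the highest similarity score."""
    top_modality = max(modality_similarity, key=modality_similarity.get)
    # Hypothetical wording per modality; only the "visual" text comes from the article.
    templates = {
        "visual": "it shares visual scenery with articles you read",
        "audio": "it sounds similar to clips you played",
        "text": "it covers topics from articles you read",
    }
    reason = templates.get(top_modality, "it resembles content you engaged with")
    return f"We suggested this video because {reason}."


# Hypothetical per-modality similarity between a candidate and the user's history.
badge = explain_recommendation({"visual": 0.82, "audio": 0.35, "text": 0.51})
```

Picking only the top modality keeps the message short. A fuller design might list every modality above a threshold or link to the specific past interactions involved.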

Bias mitigation teams at VK monitor embedding clusters for over-representation of any demographic. When a cluster skews toward Western business attire in a "professional clothing" query, engineers rebalance training data and adjust the contrastive loss to promote diversity. YouTube and TikTok apply similar monitoring to detect when recommendation clusters fail to represent the full range of user communities.
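A basic version of this kind of monitoring compares each group's share inside a cluster with a reference share. The sketch below is an assumption about how such a check could look, not a description of VK's, YouTube's, or TikTok's tooling; the group labels, reference shares, and the 1.5x threshold are invented for illustration.

```python
from collections import Counter


def overrepresented_groups(cluster_labels, reference_share, max_ratio=1.5):
    """Flag groups whose share in the cluster exceeds max_ratio times the reference.

    Returns a dict mapping each flagged group to its observed/expected ratio.
    """
    counts = Counter(cluster_labels)
    total = len(cluster_labels)
    flagged = {}
    for group, count in counts.items():
        expected = reference_share.get(group)
        if not expected:
            continue  # no reference share for this group, so nothing to compare
        ratio = (count / total) / expected
        if ratio > max_ratio:
            flagged[group] = round(ratio, 2)
    return flagged


# Hypothetical labels for the top results of a "professional clothing" query.
cluster_labels = ["western_suit"] * 8 + ["sari"] * 1 + ["agbada"] * 1

# Hypothetical target shares chosen by the review team.
reference_share = {"western_suit": 0.4, "sari": 0.3, "agbada": 0.3}

flagged = overrepresented_groups(cluster_labels, reference_share)
```

Here Western suits fill 80 percent of the cluster against a 40 percent reference, so the check flags them at twice the expected share. A flag like this would be the trigger for the data rebalancing described above.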

What comes next for multimodal AI

Next-generation models will not only rank existing content but also generate new media. Imagine a system that scans a user's watch history, extracts recurring visual themes, and automatically assembles a highlight reel. Early prototypes already exist in niche platforms. Widespread adoption hinges on lower compute costs and stronger user trust.

As compute becomes cheaper and standards for explainability solidify, multimodal embeddings will become the default backbone for search, recommendation, and moderation across the internet. Users can expect more transparent controls: toggles to adjust how much weight the system gives to visual versus textual signals, or opt-in explanations that show which past interactions influenced each recommendation. The technology is ready. The challenge now is to deploy it with bias checks, transparent explanations, and user-centered controls that put choice back in the hands of the people who consume the content.