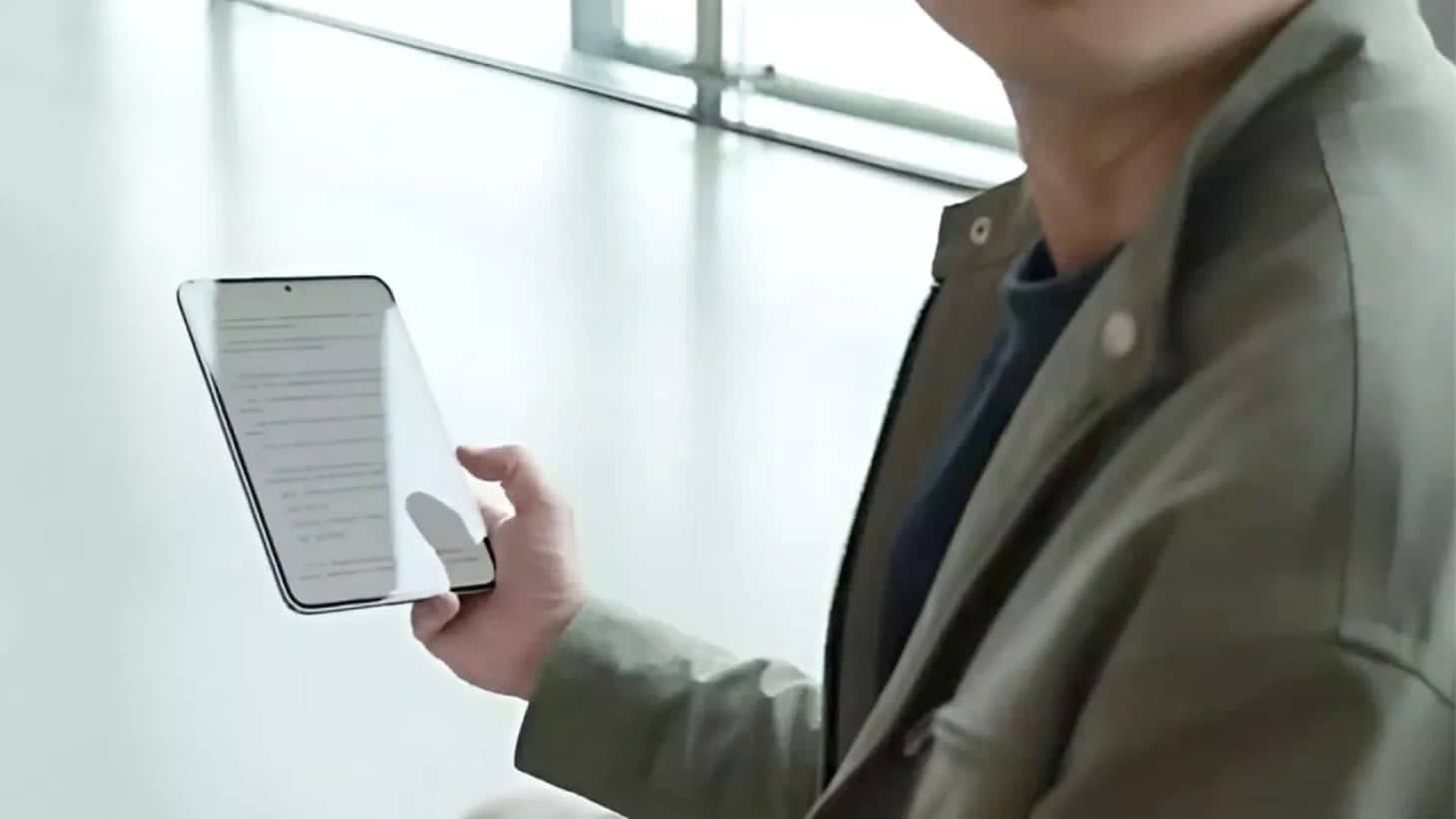

Google unveiled an AI avatar tool for YouTube Shorts on May 15, granting creators 18 and older the ability to generate digital replicas of themselves. The feature allows users to create personalized avatars that can appear in their videos, offering a new approach to content creation on the platform.

The system records facial movements and voice patterns, then renders a lifelike avatar that can be inserted into new or existing Shorts. The creator supplies the recordings, and the AI model generates the avatar from them. The avatar functions as both mirror and mask: realistic enough to convince viewers, while flexible enough to be deployed across multiple videos.

The tool launches as YouTube ramps up its Shorts push, offering creators a low-cost production alternative that eliminates the need for constant on-camera appearances. The rollout also follows the recent shutdown of OpenAI's Sora app, which had offered similar AI video creation functionality before being pulled from circulation.

Google has confirmed the rollout will be gradual, with access restricted to creators using avatars exclusively in their own content. The company imposed three key safeguards: a minimum age requirement of 18, automatic deletion of avatars after three years of inactivity, and mandatory SynthID labeling on all AI-generated Shorts.

The labeling requirement serves a critical purpose. Every video produced with an avatar must carry an AI-generated tag, a clear signal to viewers that what they're watching is synthetic, even if the voice and face belong to a real person.

By design, the tool lowers production barriers for creators. But it also introduces a new variable into the trust equation. When a creator's face becomes infinitely reproducible, the boundary between authentic self-expression and AI-assisted performance begins to blur.

For U.S. creators, this opens creative possibilities: voiceovers without recording sessions, on-screen presence without cameras, and the ability to produce content more efficiently. It also raises questions about what audiences are consenting to when they engage with content that looks human but is assembled by AI algorithms.

YouTube plans to monitor adoption patterns and user feedback, with potential expansion of the feature later in the year. Meanwhile, regulators are tracking AI-generated content labeling practices across platforms, scrutinizing whether transparency measures are sufficient or merely symbolic.

The feature is now rolling out gradually to eligible creators in the United States and select markets, marking a significant shift in how digital content can be produced and consumed on social media platforms.
